Can AI Think Outside the Map?

Artificial intelligence is getting very good at answering questions.

It can write essays, generate images, predict protein structures, debug code, diagnose disease. Each week brings another announcement that sounds, at least on the surface, like a boundary being crossed.

People keep reaching for the same phrase: this changes everything.

But beneath the excitement, there’s a quieter question—one that’s harder to phrase, and easier to ignore.

If AI can do so much inside our existing frameworks of knowledge, will it also be able to generate the frameworks themselves?

Put differently: can it think outside the map?

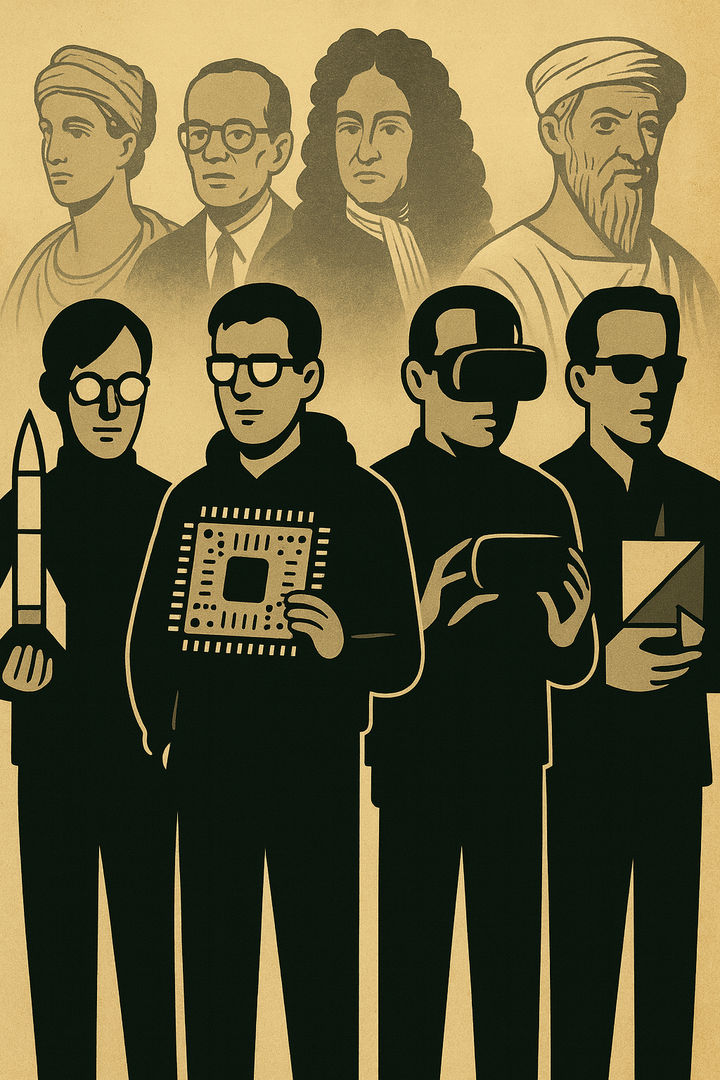

That question matters more than it first appears. The changes that genuinely reshaped history rarely came from better answers to familiar problems. They came from moments when it became clear that the problem itself had been misidentified.

Medicine wasn’t transformed when doctors refined theories of “bad air,” but when illness was understood as something transmitted by organisms no one could see. Astronomy didn’t advance because planetary motion was tracked more precisely, but because Earth was quietly displaced from the center of the picture.

Again and again, progress arrived not through refinement, but through reorientation.

What’s easy to miss is what happens when that reorientation doesn’t occur.

We keep improving our answers while repeating the same kinds of mistakes. We build more sophisticated systems to solve familiar problems and wonder why the deeper patterns don’t shift. Things change, but the shape of the difficulty remains strangely intact.

When the map is wrong, better answers don’t resolve the difficulty. They can even make it harder to notice.

And shifts of the kind that actually matter rarely begin where our explanations are already working.

What explanation quietly assumes

Explanation usually begins by deciding what kind of thing something is, and only then asking how it works.

This happens so quickly we rarely notice it. Before we explain, we sort—often implicitly—whether we’re dealing with a machine, a person, a system, a market, a disorder, a mind.

Once that decision is in place, explanation can proceed. Certain questions make sense. Certain answers count. Certain methods feel appropriate.

Psychology assumes a mind.

Economics assumes agents with interests.

Machine learning assumes an objective to be optimized.

These assumptions aren’t mistakes. They’re what allow inquiry to get off the ground at all. But in settling what kind of thing we’re dealing with, they also settle—quietly and usually without discussion—a more basic question: what is this thing, such that explanation applies here in the first place?

Once that question recedes, attention turns inward—toward refinement and prediction. Most of the time, that’s exactly what we want. But when it isn’t, we rarely notice why.

The blind spot before the map

Every so often, a different kind of question presses forward—one that doesn’t ask how something works, but whether our way of understanding it still holds.

Not what causes this?

But what kind of thing is this, really?

Psychology offers a familiar example. Entire fields are devoted to explaining how the mind behaves, develops, suffers, and relates to the brain. The work is often careful, rigorous, and genuinely useful.

But pause for a moment and ask a simpler question: what is a mind, anyway?

Not how it behaves.

Not what it correlates with.

But what sort of thing it is.

Despite decades of research, there’s still no shared answer. Is the mind a process? A pattern? A computation? An illusion? Each proposal redraws the map slightly—and each leaves something unresolved.

And yet explanation continues—not because of a failure, but because this is how explanation works. Once we decide what kind of thing we’re dealing with, progress becomes possible. What hasn’t been resolved doesn’t vanish; it simply fades into the background.

This is the blind spot before the map: the place where explanation depends on assumptions it does not, and often cannot, explain.

We’re trained to define things, isolate variables, build models, and refine answers. Those skills are indispensable. But they rely on something being held steady in the background.

When that background no longer fits, refinement doesn’t correct the error. It deepens it.

Pre-map thinking isn’t a rejection of explanation. It’s an attempt to notice when explanation has begun too late.

Recognition, not derivation

Insight at this level rarely arrives as a solution.

More often, it arrives as recognition.

That’s partly because many of the things we’re trying to understand here can’t be seen directly. Minds, social dynamics, and systems don’t present themselves as objects. What we encounter instead are their effects—their breakdowns, their characteristic patterns.

You begin to notice the same pattern surfacing across situations that don’t seem related at first: a person who knows what needs to be done but can’t act; a group with shared aims that keeps fracturing; a system that grows more rigid the more carefully it’s optimized.

Again and again, the same tension appears. Activity increases, but direction doesn’t.

Recognition tells us something important is missing—but it doesn’t yet tell us what.

At this level, insight also depends on reasoning from necessity—asking what must be true if these patterns keep appearing, even when the underlying structure can’t be directly observed.

Seen this way, insight comes from holding recognition and reasoning together.

The reward isn’t a conclusion. It’s orientation.

Where AI sharpens the question

Artificial intelligence is remarkably good at working inside well-defined frames. Given a goal, a representation, and a way to measure success, it can explore vast possibility spaces and outperform human intuition with ease.

In that sense, AI is a powerful accelerator.

But optimization only works once the frame is already in place. It comes after we’ve decided what kind of thing we’re dealing with, what counts as success, and what can change without losing the point.

AI doesn’t ask whether that framing still fits. It inherits it.

This is where the stakes sharpen. If the ways we currently frame our problems already lead us into familiar dead ends, automating those framings doesn’t interrupt the pattern—it stabilizes it. We get faster, more confident answers to questions that may no longer deserve our attention.

The issue isn’t that AI hits obstacles inside a problem. It’s that constraints shape the problem itself. They determine which questions can be asked, which solutions can appear, and which possibilities never enter view.

Most current AI systems don’t reason at that level. They inherit their constraints from data, architectures, and objectives supplied from outside. Within those boundaries, they can be astonishingly capable. But the boundaries themselves are not something they can step back and reconsider.

That’s why so much current debate circles around limits—scaling, alignment, interpretability. The unease isn’t simply that systems are getting smarter. It’s that they’re getting more effective while the assumptions defining what counts as success remain largely untouched.

AI can help surface those assumptions. But noticing that a constraint exists, and deciding whether it still fits, is a different kind of work.

The work that can’t be delegated

None of this requires rejecting AI, or treating it as a threat.

It requires recognizing that some kinds of thinking don’t disappear when better tools arrive. They become more important.

Systems that optimize within a frame can take us very far. But deciding when a frame itself needs to change is not something optimization naturally does. That decision tends to arise from lived tension—when progress starts to feel repetitive, when outcomes drift from intentions, when something essential keeps getting lost.

That kind of noticing depends on context and consequence. It depends on being affected by the results of our own assumptions, and having to live with what they produce.

As more decision-making is handed off to machines, the risk isn’t that thinking disappears.

It’s that the hardest part of thinking—the part that asks whether we’re even asking the right questions—quietly goes unattended.

If no one stays with that responsibility, the map doesn’t vanish.

It decides for us.

And with that, I'm going to go work on some AI automation for my business.
